Science of Learning

The Measurement Gap in Modern Learning

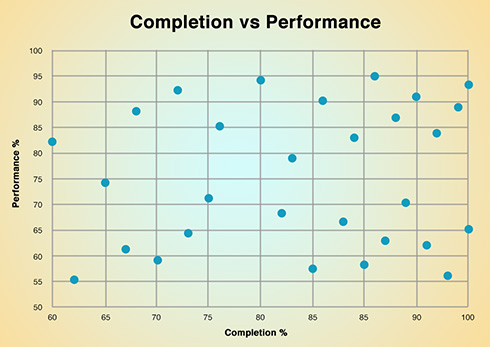

Across education systems and workforce training environments, learning is primarily measured through static outputs: scores, completion rates, and certifications. These indicators provide benchmarks and accountability. However, they represent terminal observations rather than ongoing cognitive states.

Educational research has long recognized that assessment outcomes do not fully capture the depth, transferability, or durability of knowledge. As noted by John Biggs, constructive alignment requires that what is assessed meaningfully reflect the learning processes intended, not merely the observable outputs.

Similarly, large-scale assessment systems often privilege standardization over depth, creating what scholars describe as a “measurement illusion”: the appearance of precision without corresponding insight into underlying competence.

As institutions scale across departments, geographies, and large learner populations, this limitation becomes more pronounced. Surface-level metrics create visibility into participation and performance snapshots, but not into learning stability or capability readiness.

This disconnect constitutes the measurement gap in modern learning.

Learning as an Evolving Process

Learning is not a one-time event concluded at assessment.

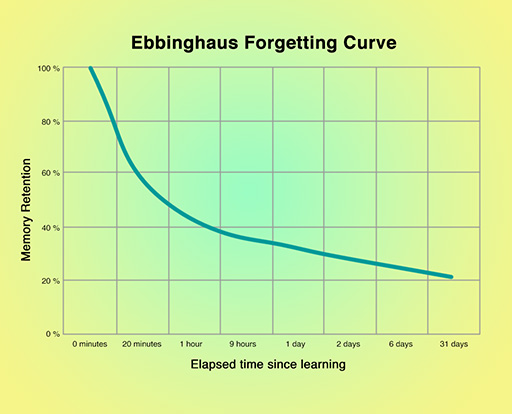

Cognitive science demonstrates that memory and understanding are dynamic. Hermann Ebbinghaus's work on memory decay illustrates that retention changes over time. Later research on retrieval practice and spaced learning, advanced by scholars such as Robert Bjork, emphasizes that learning strengthens through reinforcement and desirable difficulties.

Learning:

- Develops through repeated interaction

- Strengthens with application

- Weakens without reinforcement

- Varies across contexts and cognitive load
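The first three points can be made concrete with a toy model. The sketch below assumes a simple exponential forgetting curve, a common textbook simplification rather than Ebbinghaus's original fitted formula, and it is not a description of any production system. The names `retention`, `simulate`, `stability`, and `boost`, along with the chosen numbers, are illustrative assumptions only.

```python
import math


def retention(days_since_review: float, stability: float) -> float:
    """Estimated recall probability under an assumed exponential decay."""
    return math.exp(-days_since_review / stability)


def simulate(review_days, horizon, stability=2.0, boost=2.0):
    """Return estimated retention for each day from 0 to `horizon`.

    Assumption: every review resets the decay clock and multiplies
    `stability` by `boost`, so later forgetting is slower.
    """
    reviews = set(review_days)
    last_review = 0
    curve = []
    for day in range(horizon + 1):
        if day > 0 and day in reviews:
            stability *= boost      # reinforcement slows future decay
            last_review = day       # recall is refreshed on the review day
        curve.append(retention(day - last_review, stability))
    return curve


# Compare a learner who never revisits the material with one who
# reviews on days 1, 4, and 9 (hypothetical schedule).
no_review = simulate([], horizon=14)
spaced = simulate([1, 4, 9], horizon=14)

print(f"Day 14 retention without review: {no_review[14]:.2f}")
print(f"Day 14 retention with spaced review: {spaced[14]:.2f}")
```

Under these assumed parameters, both learners would look identical on a test taken on day 0, yet their estimated retention two weeks later differs sharply. A single terminal score cannot distinguish the two trajectories.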

Performance in one condition does not guarantee performance in another. Competence is not merely demonstrated; it is sustained, reinforced, and transferred.

Measurement systems must therefore evolve to reflect learning as an ongoing, context-sensitive process rather than a static achievement.

Why This Matters at Scale

In workforce development and institutional education, scale introduces complexity.

Research in organizational capability and human capital development consistently highlights that performance reliability depends not only on training completion, but on the stability and transferability of knowledge in operational contexts.

Without deeper visibility:

- Training ROI remains difficult to evaluate beyond participation metrics

- Skill-readiness cannot be confidently inferred from certification alone

- Performance variability may only become apparent under real-world conditions

- Institutions operate reactively rather than proactively

As capability systems grow larger, uncertainty compounds.

Improved learning intelligence enables institutions to move from reporting activity to understanding capability. It supports strategic workforce planning, risk-aware training investments, and more resilient performance ecosystems.

Our Research Direction

At Edculcate, our research focuses on building scalable systems that analyze large-scale learning interactions and translate them into structured intelligence for institutional decision-making.

Our approach draws from:

- Behavioral data science

- Longitudinal learning research

- Applied cognitive psychology

- Scalable computational systems

Rather than relying solely on terminal assessment outcomes, our work explores how interaction-level learning data can provide deeper visibility into capability formation over time.

Our modeling architecture and analytical frameworks are proprietary and under active IP development. As this research advances, our objective is to contribute meaningfully to the evolving science of learning measurement at institutional scale.

Selected Research Foundations:

- Ebbinghaus, H. (1885). Memory: A Contribution to Experimental Psychology.

- Bjork, R. A. (1994). Memory and Metamemory Considerations in the Training of Human Beings.

- Biggs, J. (1996). Enhancing Teaching through Constructive Alignment.

- Research on spaced repetition, retrieval practice, and transfer of learning.